The newest version of the Met App, V.1.1, was released earlier today, and includes dozens of design and usability refinements inspired by feedback from our users. Among a number of new enhancements being rolled out, I hope users will enjoy the new "Favorites" feature, which enables the creation of personal lists of exhibitions, artworks, and events happening across the Museum. I can't wait to see what makes it to the shortlists of our 134,000-plus users.

One of the refinements I'm most proud of is the increased accessibility of the app. For the first time, smartphone owners with visual disabilities will be able to use the Met App to find out more about what's happening at the Museum, thanks to V.1.1's compatibility with iOS Accessibility features including VoiceOver, Zoom, and Larger Text. The Met has a rich history of making its facilities and programs accessible to visitors with a range of disabilities, as Rebecca McGinnis discussed with the New York Times in October 2013. Striving to enable universal access to the flagship app was a natural progression of this concern, and a priority for our mobile team from the start.

The task of making a product accessible can be challenging, and not all product owners choose to pursue this goal. We knew that we would face some risk in terms of cost, approach, and technical feasibility, so our team hedged against the unknowns with two important steps.

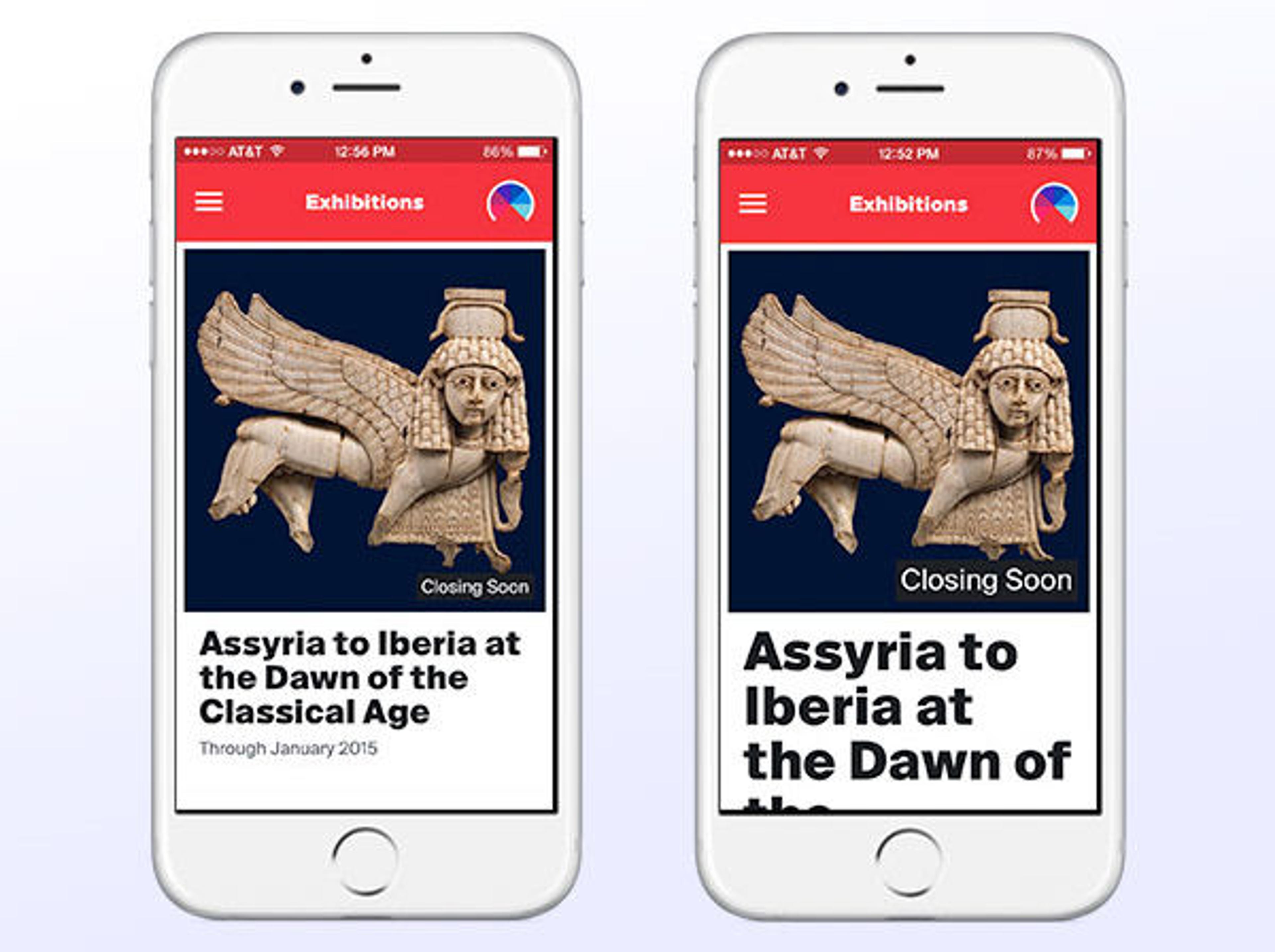

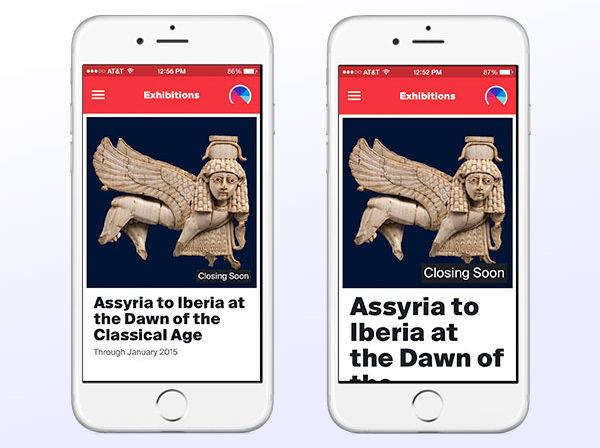

First, in partnership with our development team, we specified the exact accessibility features the app needed to support in the Scope of Work, a crucial step that formalized our team's commitment to specific benchmarks. For instance, we targeted compatibility with the iOS Accessibility feature Larger Text in service of the general goal of legibility. This early effort protected us against trying to hit a moving target later on in the process.

All iPhone users can increase text sizes in the accessibility settings on their device. The text in the Met App now adjusts accordingly.
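For readers curious what Larger Text support can look like in code, here is a minimal UIKit sketch. It is not taken from the Met App's source: the helper name `makeScalableLabel` is hypothetical, and it assumes a UIKit label styled with a system text style on iOS 10 or later.

```swift
import UIKit

// Hypothetical helper for illustration only; not the Met App's actual code.
func makeScalableLabel(text: String) -> UILabel {
    let label = UILabel()
    label.text = text
    // A system text style gives a font sized for the user's current text size setting.
    label.font = UIFont.preferredFont(forTextStyle: .body)
    // Ask UIKit to update the font when the user changes that setting (iOS 10+).
    label.adjustsFontForContentSizeCategory = true
    // Allow wrapping so larger text is not truncated to a single line.
    label.numberOfLines = 0
    return label
}
```

Setting `numberOfLines` to 0 matters as much as the font itself, because text that scales up but gets cut off is no more legible than small text.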

The second step we took to achieve accessibility is particularly worth stressing: We brought on a reputable accessibility expert to conduct usability reviews. Every few weeks, we shared the app with our partner, Sina Bahram, who is president of Prime Access Consulting as well as an iOS developer and user of VoiceOver, the screen reader on iOS products. Showing no mercy, Bahram would routinely point out the gaps in our efforts using the think-aloud protocol. A one-hour meeting with Bahram would often yield several days' worth of development tasks.

Of course, we learned a lot. The technical process of making an iOS app compatible with the operating system's built-in Accessibility features sounds simple enough, but the work is only partially complete without regular user testing. For instance, after the developers spent several hours making the side menu navigable using VoiceOver, we discovered in less than five minutes of user testing that the button one presses to get to that menu was not detectable with VoiceOver; therefore, our participants never even knew the menu existed. The fix for the problem, thankfully, was a simple, five-minute code change.
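A fix of this kind is often only a few lines long. The sketch below shows one common way to expose a custom button to VoiceOver in UIKit. It is an assumption about the general shape of such a change, not a record of what our developers actually wrote; the function name `makeMenuButton`, the asset name `menu-icon`, and the label and hint strings are all hypothetical.

```swift
import UIKit

// Hypothetical example; the real Met App fix may have looked different.
func makeMenuButton() -> UIButton {
    let button = UIButton(type: .custom)
    // "menu-icon" is a placeholder asset name; the image is nil if no such asset exists.
    button.setImage(UIImage(named: "menu-icon"), for: .normal)
    // Make sure VoiceOver treats the control as a focusable element.
    button.isAccessibilityElement = true
    // Spoken name of the control; an icon-only button has no visible title to read.
    button.accessibilityLabel = "Menu"
    // Optional extra context that VoiceOver reads after the label.
    button.accessibilityHint = "Opens the side menu."
    // Announce the element as a button.
    button.accessibilityTraits = .button
    return button
}
```

Code like this is easy to write and easy to forget, which is why a few minutes with a VoiceOver user caught what hours of development did not.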

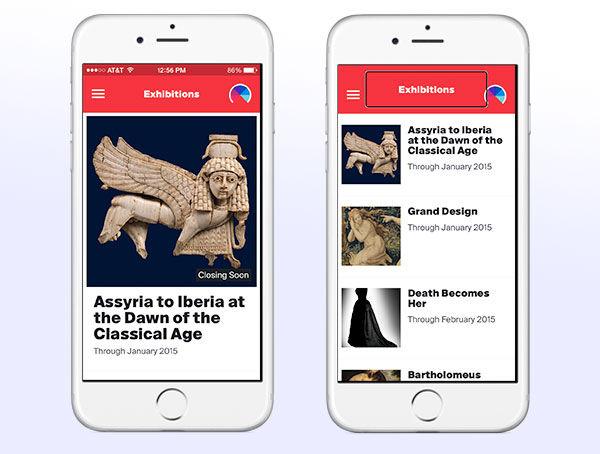

When the iOS screen reader VoiceOver is enabled, the Met App layout adjusts to facilitate navigation using the gestures specific to VoiceOver.

So how did we do in the end? Mr. Bahram concluded that "the Met App uses technology to make both virtual and physical environments better, and enhances inclusiveness for all audiences. It is an accessible achievement." Not bad at all.

By summer 2015, we will be rolling out the Android version of the Met App. The task of building in accessibility for visual disabilities will surely present a new set of challenges in the Android-based coding environment, but I would like to think we're up to it.

Download the Met App V.1.1 and tell us what you think by sending an email to mobilefeedback@metmuseum.org. We are always listening and looking for opportunities for improvement.